Mounting pressure can be problematic. Frances Buontempo takes a step back and wonders if pressure is always a bad thing.

The news in the UK has told me everything is under pressure, and I know the feeling. I have been trying to write two conference talks, along with doing a variety of other tasks and there are just not enough hours in the day. Suffice it to say, I haven’t written an editorial, but instead thought long and hard about being under pressure. Much of this is of my own making and I must learn to say, “No” more often. Maybe the start of a new year is a good time to reflect and think about how to make changes.

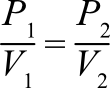

Mind you, pressure, in and of itself, is not a bad thing. We own a pressure cooker, which is useful for cooking beans and pulses. My hazy memory of physics from school tells me that the cooker is sealed, so the volume stays fixed, meaning that as heat is supplied both the pressure and the temperature increase, cooking food more quickly than in an unsealed pan. The internet suggests this is related to Gay-Lussac’s law, “the pressure of a gas varies directly with temperature when mass and volume are kept constant”, so that

$$\frac{P_1}{T_1} = \frac{P_2}{T_2}$$

for two pressures, $P_1$, $P_2$ and the two corresponding temperatures, $T_1$, $T_2$ [ChemTalk]. Similar physics means trying to boil a kettle up a mountain is somewhat difficult, or rather the water boils, but at a much lower temperature than some people would want. Since the atmospheric pressure is lower there, water boils below 100°C, making a substandard cup of tea. Pressure is sometimes useful!
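As a rough worked example of Gay-Lussac’s law, suppose a sealed container of gas starts at room temperature and atmospheric pressure and is then heated. The numbers below are illustrative assumptions, not measurements from a real pressure cooker, and the temperatures must be absolute (kelvin) for the proportionality to hold:

$$P_2 = P_1 \frac{T_2}{T_1} = 1\,\text{atm} \times \frac{393\,\text{K}}{293\,\text{K}} \approx 1.34\,\text{atm}$$

So heating the gas from about 20°C to about 120°C at fixed volume raises the pressure by roughly a third. A real cooker also contains boiling water and a release valve, so treat this as a sketch of the gas law only.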

Running performance tests on a system can be illuminating. Stress or load tests simulate high traffic to a website, service or similar, to see what happens. There is a subtle difference between them. A load test shows what happens under an expected load, meaning you know how long to expect a response or similar to take. In contrast, a stress test finds the upper limits of a system’s capacity, meaning you can prepare for a DDoS attack, or at least be aware of what might happen to your system [LoadNinja]. You can even run such tests as part of your CI pipeline, to keep an eye on timings and so on. Putting your system under pressure in a dev environment prepares you for production. In theory.
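A timing check of the kind that might run in a CI pipeline could look something like the sketch below. Everything here is an assumption for illustration: `handle_request` is a hypothetical stand-in for the system under test, and the 95th percentile and the time budget are arbitrary choices rather than anything recommended by [LoadNinja].

```python
import math
import time


def handle_request(payload: int) -> int:
    """Hypothetical stand-in for the system under test."""
    return sum(i * i for i in range(payload))


def measure_latencies(fn, payload, calls):
    """Call fn(payload) repeatedly and return each call's duration in seconds."""
    durations = []
    for _ in range(calls):
        start = time.perf_counter()
        fn(payload)
        durations.append(time.perf_counter() - start)
    return durations


def percentile(samples, q):
    """Nearest-rank percentile of samples, for q in (0, 100]."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(q * len(ordered) / 100))
    return ordered[rank - 1]


def within_budget(samples, budget_seconds, q=95):
    """True if the q-th percentile latency is inside the time budget."""
    return percentile(samples, q) <= budget_seconds


if __name__ == "__main__":
    # Load test: an expected payload size, checked against a budget.
    samples = measure_latencies(handle_request, payload=10_000, calls=50)
    print("p95 seconds:", percentile(samples, 95))
    print("within budget:", within_budget(samples, budget_seconds=0.5))
```

A stress test would reuse the same pieces but keep increasing the payload, or the number of concurrent callers, until the budget is broken. Timings vary between machines, so a fixed budget in CI needs some slack.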

Knowing upper limits can be important. Big-oh notation is a fundamental part of many computer science courses. For once, that was not a typo; the technical term is big-o, but, like me, you might say uh-oh internally if someone asks what the space or time complexity of a specific algorithm is, when you haven’t thought about this for a long time. Nothing like the pressure of an interview or similar to make your brain seize up. Of course, big-o is short for order of approximation [Wikipedia]. It tells us how many calculations, or how much space, an algorithm might take in the worst case, giving a sense of how an algorithm scales with the item count. Roger Orr wrote an article a long while ago, looking at what can actually happen in real life [Orr14]. He reminded us that big-O “may ignore other factors, such as memory access costs that have become increasingly important in recent years.” Given the article was written in 2014, I wonder how different the results might look today. Feel free to re-read the article and run the test cases to see what happens now.
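To get a feel for how worst-case step counts scale with the item count, here is a small sketch comparing a linear search, which is O(n), with a binary search over sorted data, which is O(log n). The function names are made up for this illustration, and the code counts loop iterations rather than measuring time, so it ignores exactly the kind of memory access costs that [Orr14] warns about.

```python
def linear_search_steps(items, target):
    """Count the elements examined by a front-to-back scan."""
    steps = 0
    for item in items:
        steps += 1
        if item == target:
            break
    return steps


def binary_search_steps(items, target):
    """Count the probes made by a binary search over sorted items."""
    steps = 0
    lo, hi = 0, len(items) - 1
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps


if __name__ == "__main__":
    for n in (10, 1_000, 100_000):
        data = list(range(n))
        missing = n  # larger than every element, so a worst case for both
        print(n, linear_search_steps(data, missing), binary_search_steps(data, missing))
```

For a thousand sorted items, the worst case is a thousand steps for the scan against ten probes for the binary search. Whether that translates into a similar difference in wall-clock time is a separate question, and one worth measuring.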

The runtime behaviour of a system can be hard to predict, and different architectures will have different runtime profiles. If a system appears to be under strain, you can try throwing more hardware at it, as the phrase goes. Not literally, of course. Now, we’ve all read, or are at least aware of, The Mythical Man-Month by Fred Brooks, originally published in 1975. He shows that adding more people to a software project that is behind schedule is likely to delay it even longer. I wonder if adding more hardware to a system can have a similar effect. Changing the hardware can improve some performance measures. Certainly an SSD is likely to be quicker than a spinning disk, and probably use less energy and generate less heat. The SSD may not improve the performance of your linked list, though. And adding a second computer might make it take even longer to traverse the nodes of the list. Changing the data structure is more likely to improve performance. Adding more hardware can speed up calculations, provided you get the parallelism right. Some problems are embarrassingly parallel, in the sense that the parts do not need to communicate, and communication is often the bottleneck, both in The Mythical Man-Month and in many parallel algorithms. Others are not, which is why we often see multithreaded code run slower than equivalent single-threaded code. The point about swapping to an SSD shows that changing a setup can make more difference than adding more of the same.

In order to cope with pressure, finding a way to do things differently often helps. If a deadline is looming, the best approach might be to limit what gets delivered, that is, a minimum viable product (MVP). Many people have written about this, and an MVP is about more than doing the bare minimum. The Agile Alliance attributes the term to Eric Ries in his Lean Startup book [Agile Alliance], and emphasises the MVP as the core piece of a strategy of experimentation. Finding a “version of a new product which allows a team to collect the maximum amount of validated learning about customers with the least effort,” means you get feedback quickly. This might mean teams “dramatically change a product that they deliver to their customers or abandon the product together based on feedback they receive from their customers.” Furthermore, the MVP is supposed to reduce stress or pressure. As with many agile ideas, small baby steps FTW.

You cannot add more people to solve certain problems, such as taking an exam. You have a limited amount of time to prepare beforehand and a maximum amount of time in the examination itself. Though you can find friends to help you revise, you cannot parallelise that task, with three or four people learning different parts and taking a section of the exam each. Well, you could try, but the invigilator will almost certainly spot what you are doing. However, if a group of friends split up to learn different aspects of a subject, and reconvene to share what they have learnt, this can work. Each person will know some of the subject in depth and by explaining it to others may understand even better. Those they explain to might pick up some of the subset explained to them. Collaborating can take some of the pressure off, and give an arena to vent frustration in. Without a group of friends to help, you can choose to focus on a smaller subset yourself, and at least ensure you can answer some of the exam questions.

If you are building a software system with several moving parts, you might split the work up between teams. However you make the split, you need to make sure the whole system works when the pieces are glued together. I’ve seen this succeed when pieces from another team are mocked out; for example, a service returning hard coded results, until the database is in place, and so on. When this happens, the teams can program to an interface and be sure the parts will slot together. Without an initial discussion on the interfaces to use, trying to make the parts work together at the last minute often leads to trouble, late nights and fraught discussions. Some pressure is avoidable.
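As a minimal sketch of programming to an interface while another team’s piece is mocked out, consider the following. All the names (`CustomerStore`, `HardCodedCustomerStore`, `greeting`) are hypothetical and invented for this example; the real interface would come out of the initial discussion between the teams.

```python
from typing import Optional, Protocol


class CustomerStore(Protocol):
    """The agreed interface: anything with this method will slot in."""

    def find_name(self, customer_id: int) -> Optional[str]:
        ...


class HardCodedCustomerStore:
    """Stand-in returning hard-coded results until the database is in place."""

    def __init__(self) -> None:
        self._names = {1: "Ada", 2: "Grace"}

    def find_name(self, customer_id: int) -> Optional[str]:
        return self._names.get(customer_id)


def greeting(store: CustomerStore, customer_id: int) -> str:
    """Code written by the other team, depending only on the interface."""
    name = store.find_name(customer_id)
    if name is None:
        return "Hello, guest!"
    return f"Hello, {name}!"


if __name__ == "__main__":
    store = HardCodedCustomerStore()
    print(greeting(store, 1))
    print(greeting(store, 99))
```

When the database-backed version arrives, it only needs to provide the same `find_name` method, and `greeting` does not have to change.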

Pressure can lead to innovation, but so can the offer of a reward. King Oscar II of Sweden and Norway offered a prize in 1885 for a solution to the problem of determining the stability of the solar system. The question was, “Will the planets of the solar system continue forever in much the same arrangement as they do at present? Or could something dramatic happen, such as a planet being flung out of the solar system entirely or colliding with the Sun?” [Britannica].

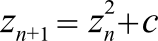

At the time, modelling the motion of two bodies was possible, but not three or more, let alone all of our solar system’s planets and moons. Poincaré won the prize, though he couldn’t fully solve the problem. His ideas led to new ways of studying differential equations. Differential equations can be used to model all kinds of dynamical systems, and every now and then people find relatively simple-looking equations that lead to very complex solutions. “Even when there is no hint of randomness in the equations, there can be genuine elements of randomness in the solutions.” [Britannica]. Now, whether the solutions exhibit genuine randomness is a big question. Certainly you might see one small change in initial conditions leading to a large change in outcomes. The recurrence relation governing the familiar Mandelbrot set gives a clear example of this. The simple-looking equation used is

$$z_{n+1} = z_n^2 + c$$

where c is a complex number and z starts at 0. Any value of c which leaves z bounded belongs to the set. The boundary of the set is very complicated and if you zoom in, you start to see patterns repeating. This is often cited as an example of chaos. I am sure this is something very different to randomness. Surprisingly complex behaviour emerging from a simple model is deterministic, and I suspect randomness is often used synonymously with non-determinism. Radioactive decay is often regarded as random, in the sense that we are not able to predict when a specific atom will decay, even if we can be very precise about the half-life of a radioactive material. Einstein said “God does not play dice with the universe” in response to the probabilistic laws used in quantum mechanics. He did not like the idea of randomness as a fundamental feature of any theory [Natarajan08]. He had other objections too, but that would require a digression into linear models and more maths and physics. The salient point is that sequences regarded as random do often have predictable properties. Whether anything is truly random is another matter. I shall attempt to broach this subject in my ACCU conference talk this year, if I ever finish my slides.
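The Mandelbrot recurrence is short enough to try out directly. The sketch below is only an approximation under stated assumptions: it repeatedly squares z and adds c, starting from zero, and treats c as belonging to the set if z has not escaped after a fixed number of iterations. The function name and the iteration limit are arbitrary choices for illustration; a finite limit can misclassify points very close to the boundary.

```python
def stays_bounded(c: complex, max_iterations: int = 1000) -> bool:
    """Approximate membership test: iterate z -> z*z + c starting from z = 0."""
    z = 0j
    for _ in range(max_iterations):
        z = z * z + c
        # Once |z| exceeds 2 the sequence is known to grow without bound.
        if abs(z) > 2.0:
            return False
    return True


if __name__ == "__main__":
    for c in (0.25, 0.26, -1, 1):
        print(c, stays_bounded(c))
```

The values 0.25 and 0.26 sit on either side of the boundary: with c = 0.25 the sequence creeps towards 0.5 and stays bounded, while with c = 0.26 it eventually escapes. Nothing random is involved, yet a small change in c flips the outcome.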

We’ve looked briefly at pressure and how it can have positive aspects. If people or systems are under too much pressure, they usually crack or fail in some way. Knowing the upper bound that a setup can handle is useful, but if the environment strays towards that upper bound, trouble is on the way. That Poincaré won a prize for developing a new area of mathematics is marvellous. Sometimes curiosity and a carrot, rather than a stick, make a great motivator. If you are under pressure to complete something by a deadline, work extra hours, or do something else you can’t manage, learn to say “No”. I haven’t yet, but might give it a go this year.

References

[Agile Alliance] Minimum Viable Product (MVP): https://www.agilealliance.org/glossary/mvp/

[Britannica] Dynamical systems theory and chaos: https://www.britannica.com/science/analysis-mathematics/Dynamical-systems-theory-and-chaos

[ChemTalk] Gay-Lussac’s Law: https://chemistrytalk.org/gay-lussacs-law/

[LoadNinja] https://loadninja.com/articles/load-stress-testing/

[Natarajan08] Vasant Natarajan (2008) ‘What Einstein meant when he said “God does not play dice…”’, Resonance, published July 2008, pp.655–660. Available online at: https://arxiv.org/ftp/arxiv/papers/1301/1301.1656.pdf

[Orr14] Roger Orr (2014) ‘Order Notation in Practice’, Overload, 22(124):14-20, December 2014. https://accu.org/journals/overload/22/124/overload124.pdf#page=15

[Wikipedia] Big O notation: https://en.wikipedia.org/wiki/Big_O_notation

Frances Buontempo has a BA in Maths + Philosophy, an MSc in Pure Maths and a PhD using AI and data mining. She's written a book about machine learning: Genetic Algorithms and Machine Learning for Programmers. She has been a programmer since the 90s, and learnt to program by reading the manual for her Dad’s BBC model B machine.

You can find details of Fran’s book, Genetic Algorithms and Machine Learning for Programmers, at https://pragprog.com/titles/fbmach/genetic-algorithms-and-machine-learning-for-programmers/